Run:ai on AWS — webinar notes (inference & autoscaling)

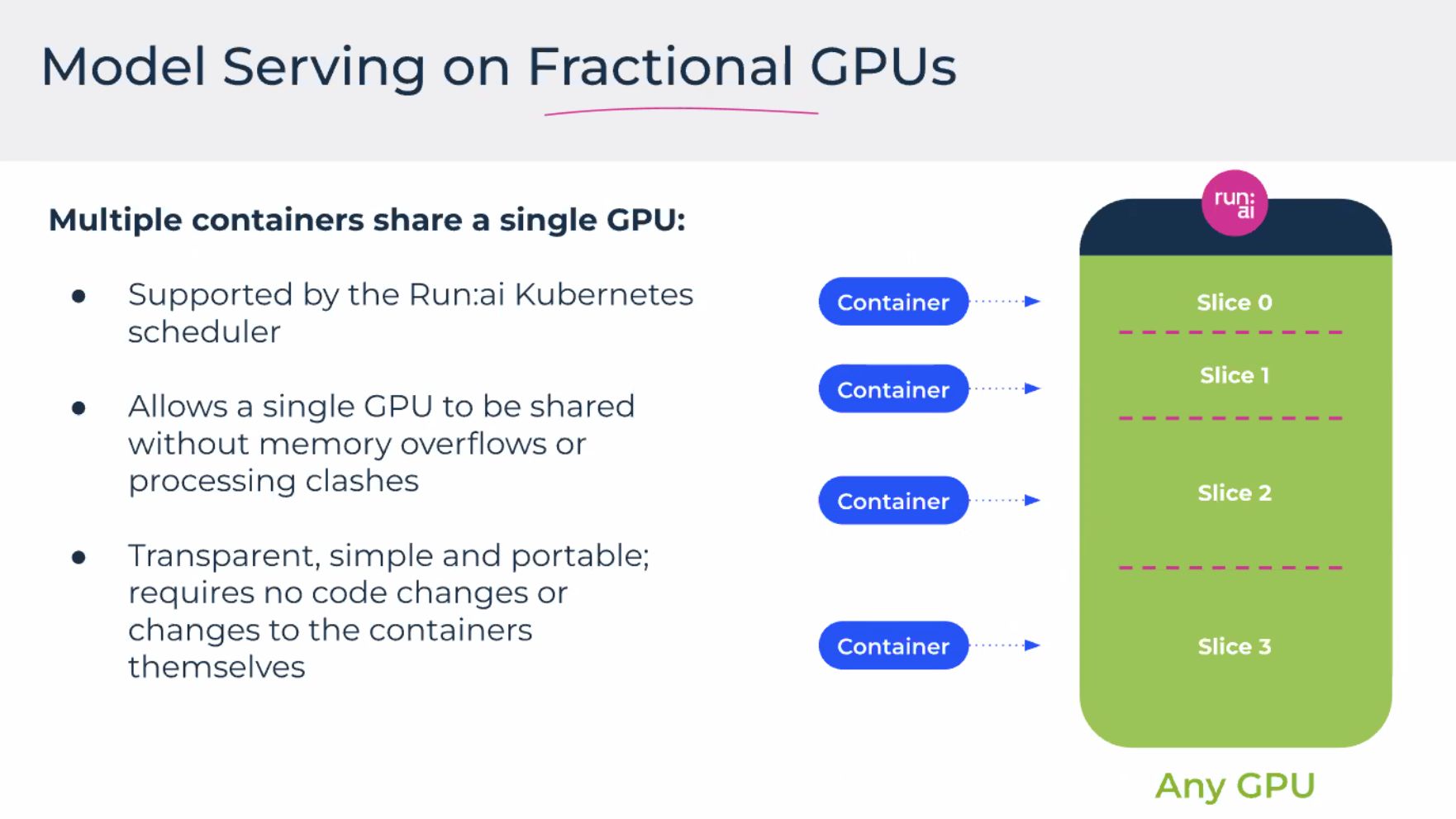

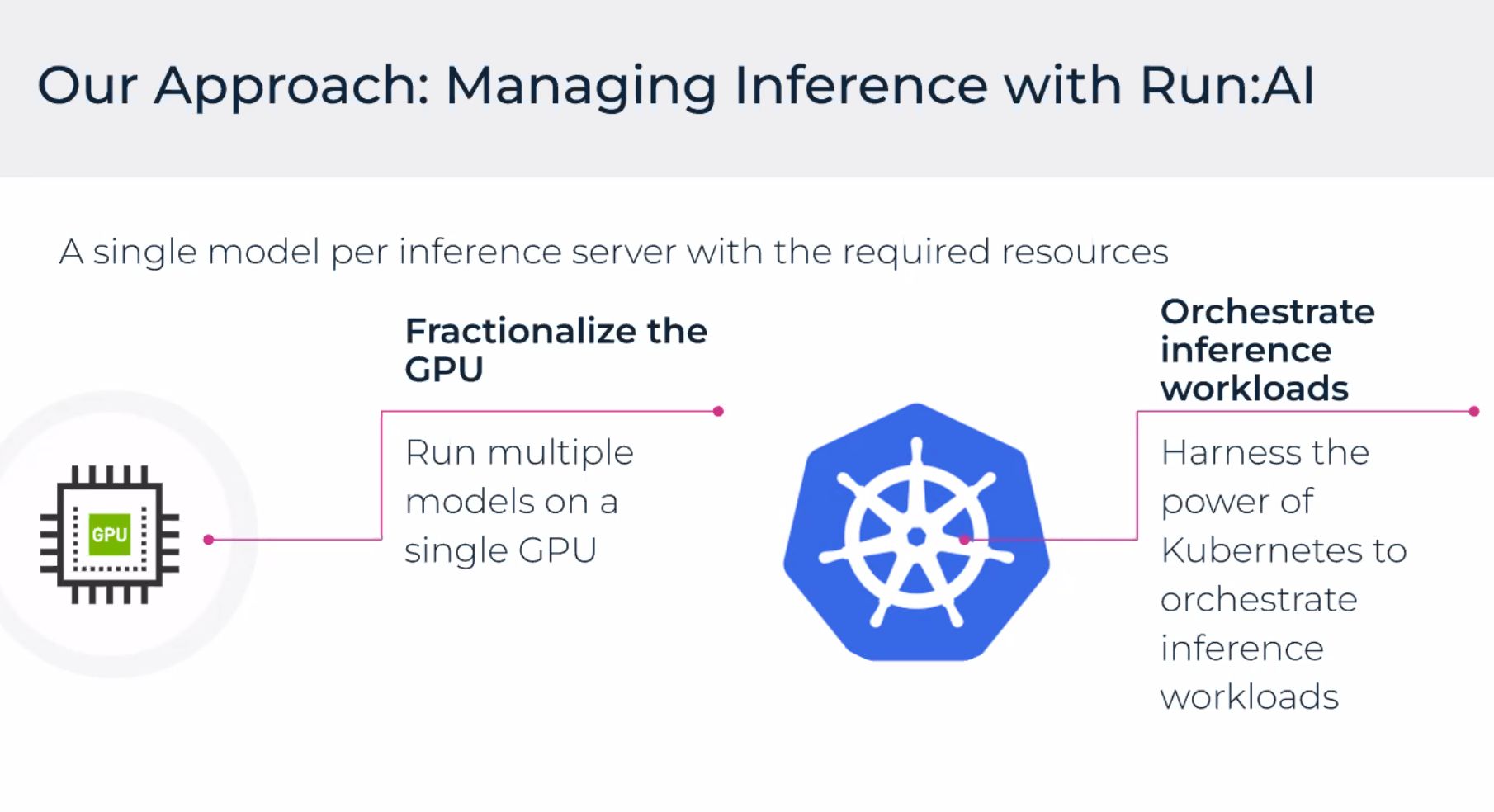

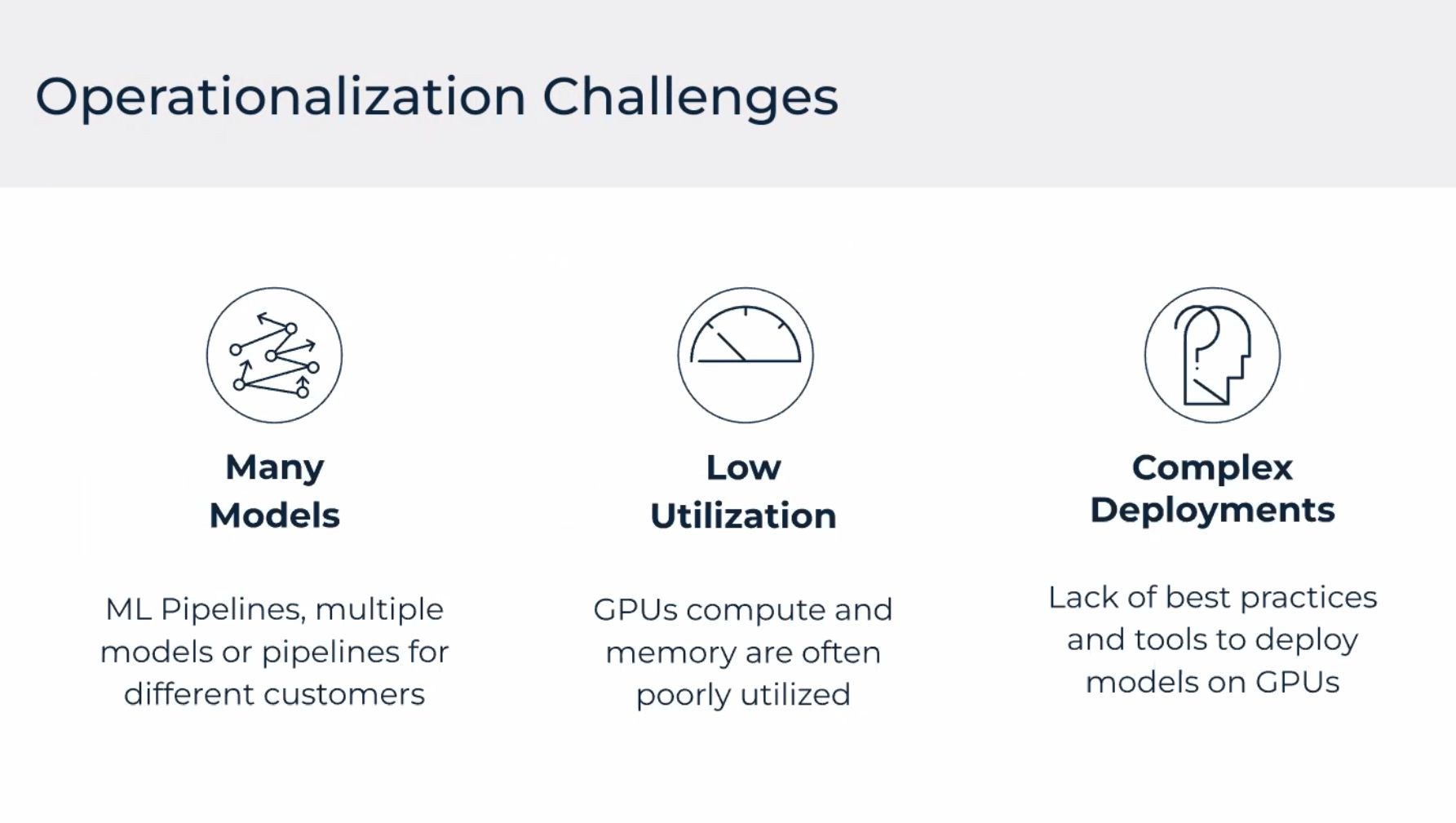

Notes from the Run:ai webinar on running and scaling inference workloads on AWS (Americas). Run:ai focuses on scheduling, visibility, and efficiency for GPU-backed models in shared environments.

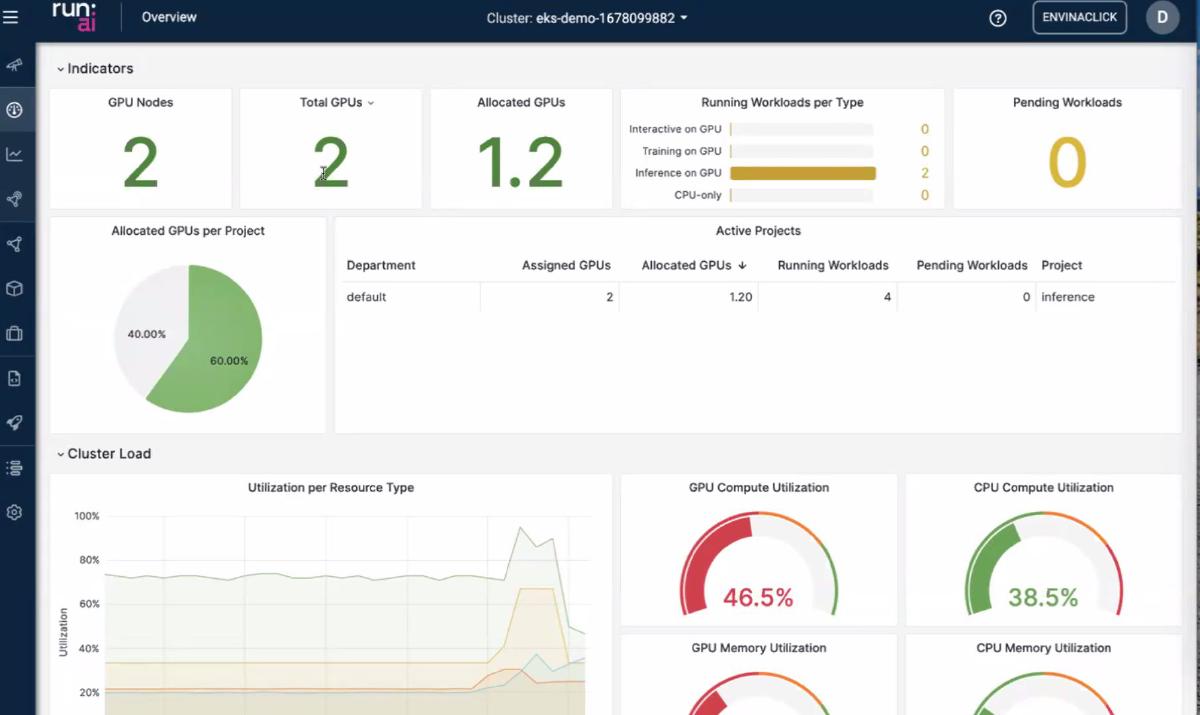

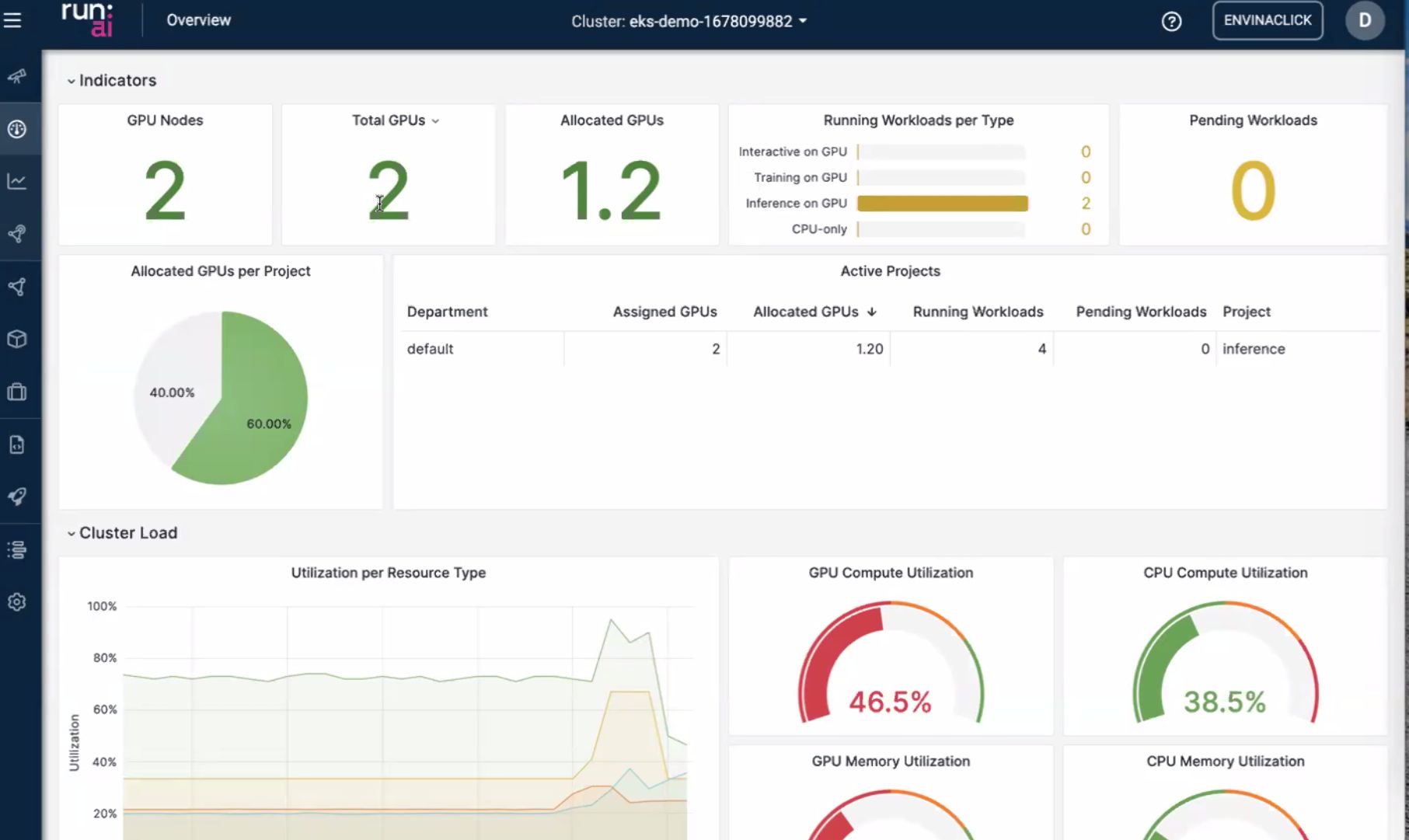

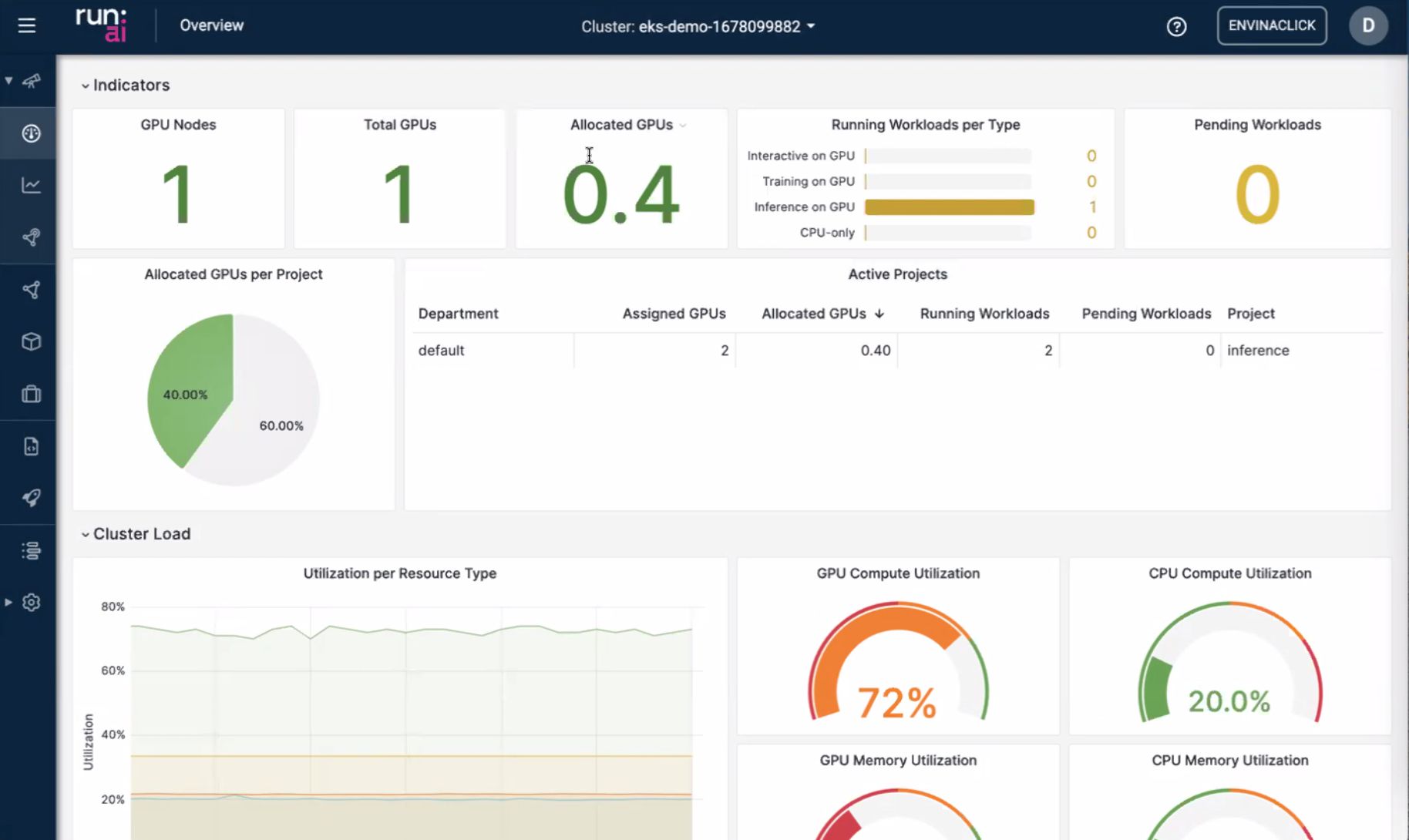

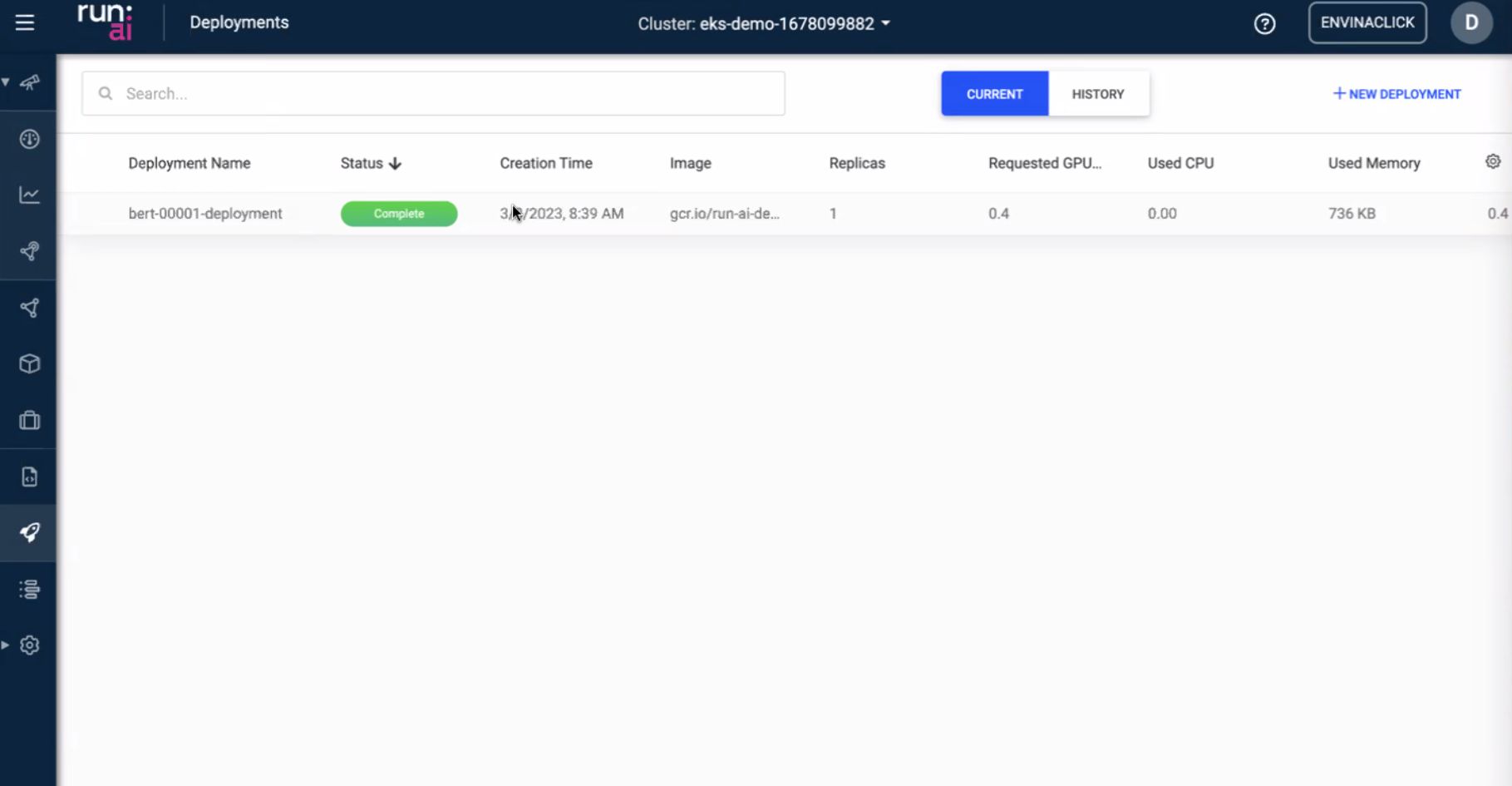

Dashboard

Overview of jobs and resource usage.

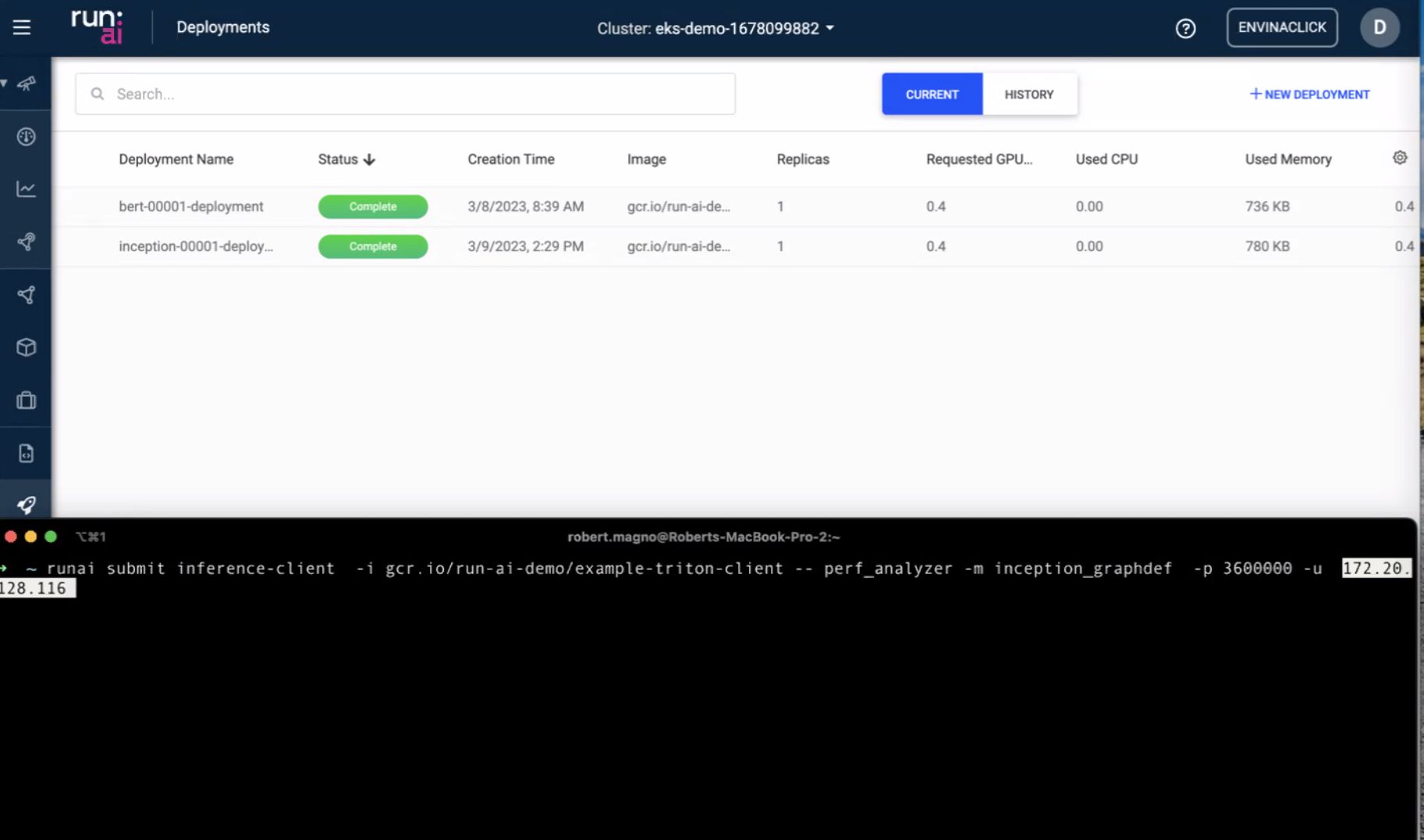

CLI

Command-line operations and automation.

Models and load

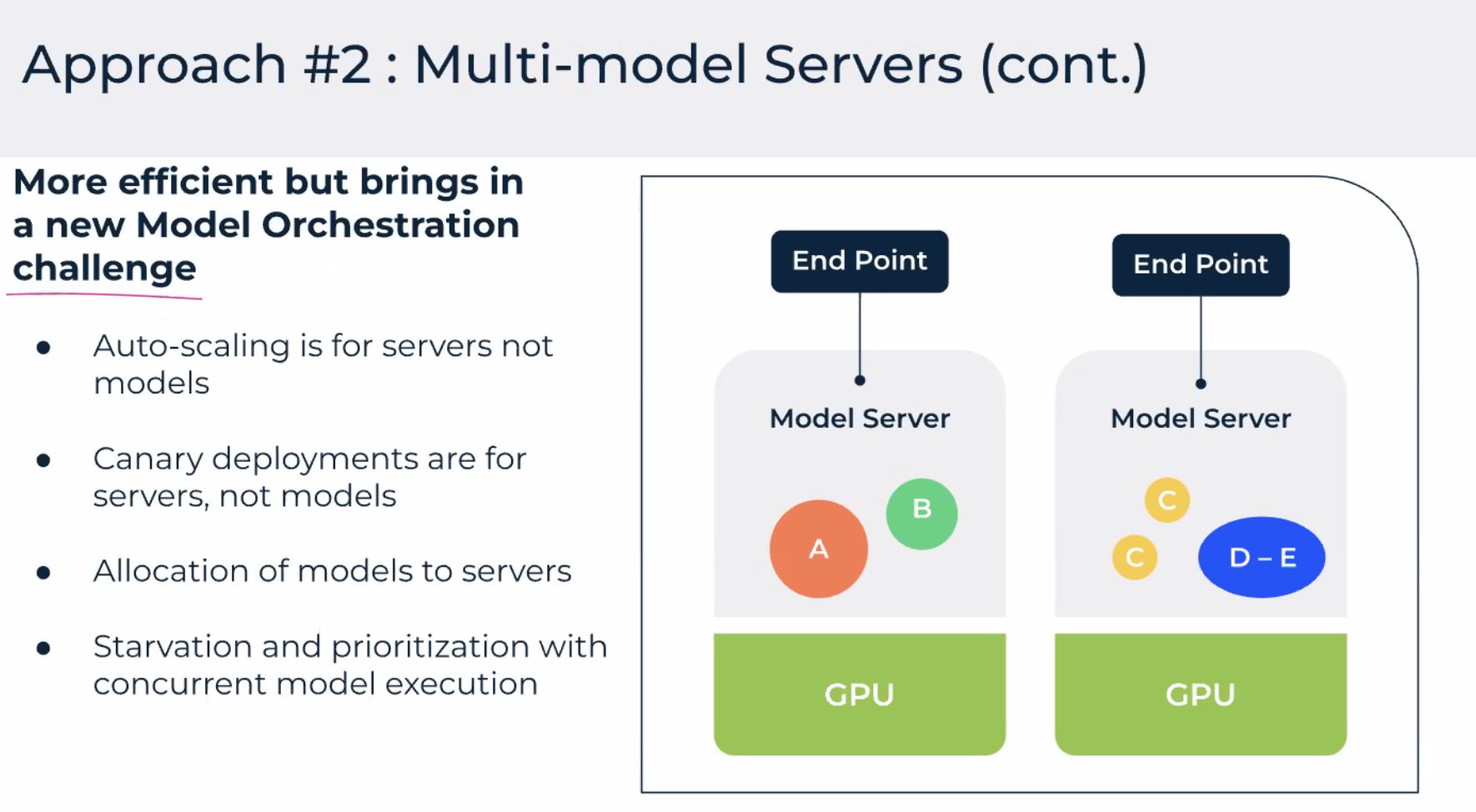

Workload management

Infrastructure view

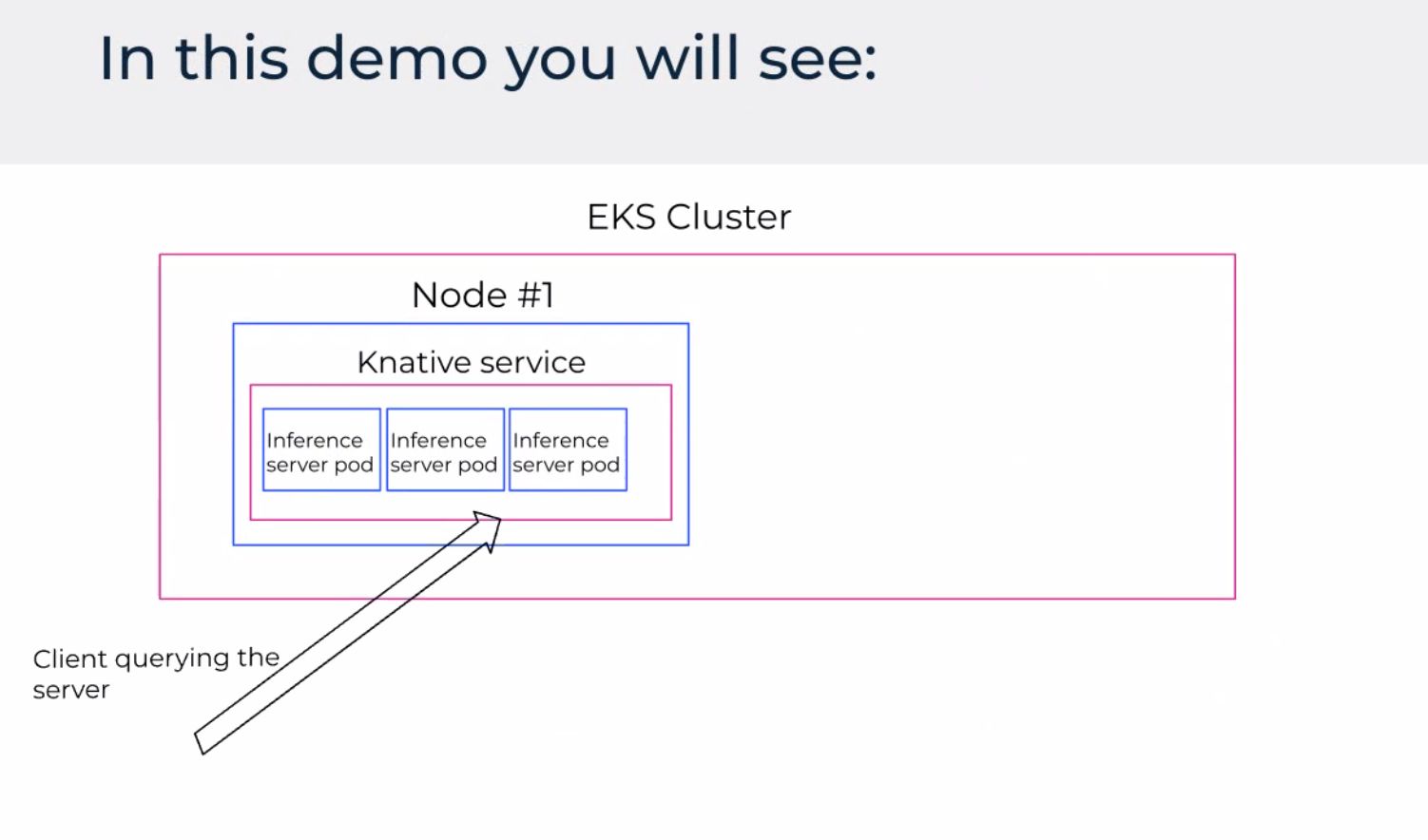

Demo

Challenges

For product details, see the official Run:ai documentation and the AWS Marketplace or partner listings.